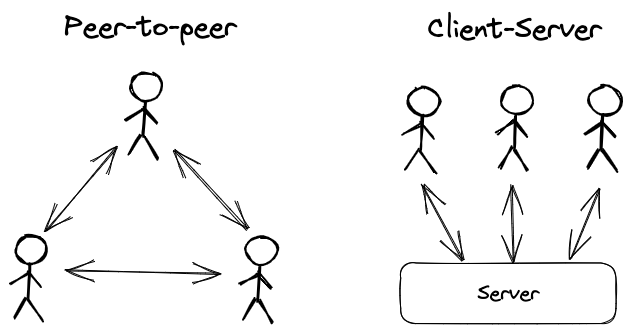

Peer-to-peer vs client-server architecture for multiplayer games

An important decision developers have to make up front when building a multiplayer game is whether to use a peer-to-peer architecture or a client-server architecture.

In a peer-to-peer setup, clients communicate directly with each other. With client-server, all communication goes through a centralized server layer.

The Hathora framework uses a client-server architecture for its applications. In this article we'll explore some of the differences between peer-to-peer and client-server across several dimensions, and we'll explain why we chose the client-server paradigm for Hathora.

Denial of Service

In peer-to-peer setups, clients know the IP addresses of the other clients they are connected to. It is therefore possible for one peer to launch a denial of service attack against another, and such an attack can be difficult to mitigate.

With a client-server setup, client IPs are kept hidden from each other but the server IP is public. While clients can't be targeted directly, denial of service attacks can still be launched against the server. Thankfully, there are known techniques to detect and mitigate server-side denial of service attacks.
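One widely used building block for this kind of mitigation is per-client rate limiting. The sketch below shows a token bucket, which allows short bursts but caps the sustained message rate of each client. It is an illustration only: the class names, the limits, and the idea of keying on a client ID are assumptions made for this example, not a description of how Hathora Cloud implements its protection. It also only covers application-level message floods; large volumetric attacks are normally absorbed at the network layer, before traffic reaches the game server.

```typescript
// Hypothetical token bucket: allows bursts up to `capacity` messages and
// refills at `refillPerSec` messages per second.
class TokenBucket {
  private tokens: number;
  private lastRefillMs: number;
  private readonly capacity: number;
  private readonly refillPerSec: number;

  constructor(capacity: number, refillPerSec: number, nowMs: number) {
    this.capacity = capacity;
    this.refillPerSec = refillPerSec;
    this.tokens = capacity; // start with a full bucket
    this.lastRefillMs = nowMs;
  }

  // Returns true if the message may be processed, false if it should be dropped.
  tryConsume(nowMs: number): boolean {
    const elapsedSec = Math.max(0, nowMs - this.lastRefillMs) / 1000;
    this.tokens = Math.min(this.capacity, this.tokens + elapsedSec * this.refillPerSec);
    this.lastRefillMs = nowMs;
    if (this.tokens < 1) {
      return false;
    }
    this.tokens -= 1;
    return true;
  }
}

// Keeps one bucket per client so a noisy client cannot starve the others.
class RateLimiter {
  private readonly buckets = new Map<string, TokenBucket>();
  private readonly capacity: number;
  private readonly refillPerSec: number;

  constructor(capacity: number, refillPerSec: number) {
    this.capacity = capacity;
    this.refillPerSec = refillPerSec;
  }

  allow(clientId: string, nowMs: number): boolean {
    let bucket = this.buckets.get(clientId);
    if (bucket === undefined) {
      bucket = new TokenBucket(this.capacity, this.refillPerSec, nowMs);
      this.buckets.set(clientId, bucket);
    }
    return bucket.tryConsume(nowMs);
  }
}
```

A server would call something like `limiter.allow(clientId, Date.now())` before handling each incoming message and drop (or disconnect) when it returns `false`. Passing the clock in as a parameter keeps the sketch easy to test.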

Hathora Cloud has DDoS protection built into its network by default so that developers don't need to worry about it.

Cheating

In the peer-to-peer architecture there is no neutral authority that governs the game, so trust has to be placed in one or more clients in the network. This makes it extremely difficult to prevent cheating by a motivated malicious client, since that client can manipulate the data it sends over the network.

In contrast, the server is able to act as the central authority in a client-server architecture. Hathora uses a server-authoritative model and places no trust in the clients, always validating their actions server-side.
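As a rough illustration of what server-side validation means in practice, the sketch below checks a movement request against a game rule before applying it. Everything in it is hypothetical: `PlayerState`, `MoveRequest`, `MAX_STEP`, and `applyMove` are names invented for this example and are not part of the Hathora API.

```typescript
// Hypothetical game types for illustration only.
interface PlayerState {
  x: number;
  y: number;
}

interface MoveRequest {
  dx: number;
  dy: number;
}

// Assumed game rule: a player may move at most this far in a single tick.
const MAX_STEP = 5;

type MoveResult =
  | { ok: true; state: PlayerState }
  | { ok: false; reason: string };

// The server never accepts a position from the client. It receives an intent
// (a delta), checks it against the rules, and computes the new state itself.
function applyMove(state: PlayerState, req: MoveRequest): MoveResult {
  if (!Number.isFinite(req.dx) || !Number.isFinite(req.dy)) {
    return { ok: false, reason: "malformed move" };
  }
  const distance = Math.hypot(req.dx, req.dy);
  if (distance > MAX_STEP) {
    return { ok: false, reason: "move exceeds maximum step" };
  }
  return { ok: true, state: { x: state.x + req.dx, y: state.y + req.dy } };
}
```

A modified client that sends `{ dx: 100, dy: 0 }` to teleport across the map simply gets its request rejected, and the authoritative state on the server is unchanged.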

Stability

In theory, a peer-to-peer network can be very resilient because no single node is necessary for the network to function as a whole. In practice, however, peer-to-peer setups often designate a single peer to act as the "host" through which all communication passes. If the host leaves the network, or has an underpowered machine or an unstable internet connection (not uncommon considering these are client devices), gameplay suffers for everyone.

With client-server, the server is the central point of failure for all clients. If the server goes down or has stability issues, that translates into downtime or instability for multiplayer functionality on the clients.

Hathora Cloud uses a highly available server-side architecture so that if a server goes down, another one can take its place without causing significant disruption for clients.

Scale

Peer-to-peer implementations generally have trouble handling high player counts because the network and compute requirements become too high for client devices at larger scale. The advantage of this architecture, however, is that no resources are shared between game instances, which provides a built-in sharding mechanism.

Client-server architectures benefit from dedicated server machines that can scale to more players in a room, but ultimately there is a limit to how many users can interact with each other concurrently in real time. Servers additionally need to address the sharding problem, since all game instances go through the same compute infrastructure.
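To make the player-count difference concrete, the sketch below counts connections under two simplified topologies. It assumes a full-mesh peer-to-peer network in which every peer connects to every other peer; a host-based peer-to-peer setup looks more like the client-server case, with one client acting as the hub. The function names are made up for this example.

```typescript
// Full mesh: each peer maintains a connection to every other peer.
function meshConnectionsPerPeer(players: number): number {
  return Math.max(0, players - 1);
}

// Full mesh: total number of distinct peer pairs, n * (n - 1) / 2.
function meshTotalConnections(players: number): number {
  return (players * (players - 1)) / 2;
}

// Client-server: each client maintains exactly one connection, to the server.
function clientServerTotalConnections(players: number): number {
  return players;
}

for (const players of [2, 8, 32, 100]) {
  console.log(
    `${players} players: mesh=${meshTotalConnections(players)} ` +
      `(each peer holds ${meshConnectionsPerPeer(players)}), ` +
      `client-server=${clientServerTotalConnections(players)}`,
  );
}
```

In the mesh, the per-peer load grows linearly and the total grows quadratically, and all of it lands on consumer hardware and consumer uplinks. With client-server, each client always holds a single connection and the growth is absorbed by the server.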

Hathora Cloud has built-in auto-scaling and can spin servers up or down based on usage. It uses a coordinator to route users to the appropriate server based on their game instance, so there is no theoretical limit to the number of game instances that can run concurrently.
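The article does not describe how the coordinator works internally, so the following is only a minimal sketch of the general idea: remember which server hosts each game instance, and place new instances on the least-loaded server that still has room. The `Coordinator` class, its methods, and the notion of a fixed per-server room capacity are all assumptions made for illustration.

```typescript
// Hypothetical server record: how many rooms it can host and how many it hosts now.
interface GameServer {
  id: string;
  capacity: number;
  rooms: number;
}

class Coordinator {
  private readonly servers: GameServer[] = [];
  private readonly roomToServer = new Map<string, string>();

  addServer(id: string, capacity: number): void {
    this.servers.push({ id, capacity, rooms: 0 });
  }

  // Returns the id of the server hosting `roomId`, allocating one on first use.
  // Returns undefined when every server is full, which an auto-scaler could
  // treat as the signal to start another server.
  route(roomId: string): string | undefined {
    const existing = this.roomToServer.get(roomId);
    if (existing !== undefined) {
      return existing; // all players in a room must land on the same server
    }

    let best: GameServer | undefined;
    for (const server of this.servers) {
      if (server.rooms >= server.capacity) {
        continue; // skip full servers
      }
      if (best === undefined || server.rooms < best.rooms) {
        best = server;
      }
    }
    if (best === undefined) {
      return undefined;
    }

    best.rooms += 1;
    this.roomToServer.set(roomId, best.id);
    return best.id;
  }
}
```

Because rooms are independent of each other, adding capacity is just a matter of calling `addServer` again; no room's state has to be shared across machines. A real coordinator would also need to release rooms when games end and handle server failures, which this sketch leaves out.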

Latency

If clients are in close proximity to each other, peer-to-peer networks can offer decent latency because network packets don't have to travel far. However, the network infrastructure that peer-to-peer connections get routed through ("last mile internet") typically has slower upload speeds and higher packet loss, ultimately driving up latency.

Client-server setups have access to better network infrastructure via datacenter connections, but their latency ultimately depends on how close the server is to the users. For example, if your game only has a single server in the US and a group of friends in Singapore wants to play together, all their traffic will be routed through the US, incurring a perceptible (200ms+ each way) latency penalty.

Hathora Cloud addresses the server latency issue by having a global network of servers and dynamically allocating game instances to servers based on the geographic distribution of connected users. This is an extension of a technique known as "edge computing", something commonly used by CDNs to cache static content closer to users so that they can access it faster.
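The sketch below illustrates one simple way such an allocation decision could be made: given an estimated latency from each candidate region to each user location, pick the region with the lowest average latency for the players in the room. The function name, the table layout, and the choice of minimizing the average (rather than, say, the worst case) are assumptions for this example, and the latency figures in the usage snippet are made-up round numbers, not measurements.

```typescript
// latencyMs[region][userLocation] = estimated latency in milliseconds.
type LatencyTable = Record<string, Record<string, number>>;

// Returns the region with the lowest average latency to the given users,
// or undefined if there are no users or no regions to choose from.
function pickRegion(latencyMs: LatencyTable, userLocations: string[]): string | undefined {
  if (userLocations.length === 0) {
    return undefined;
  }

  let bestRegion: string | undefined;
  let bestAverage = Infinity;

  for (const [region, row] of Object.entries(latencyMs)) {
    let total = 0;
    for (const location of userLocations) {
      // Treat a missing estimate as unreachable so the region is never chosen.
      total += row[location] ?? Infinity;
    }
    const average = total / userLocations.length;
    if (average < bestAverage) {
      bestAverage = average;
      bestRegion = region;
    }
  }
  return bestRegion;
}

// Illustrative figures only.
const exampleLatency: LatencyTable = {
  "us-east": { "new-york": 10, singapore: 230 },
  singapore: { "new-york": 230, singapore: 5 },
};

console.log(pickRegion(exampleLatency, ["singapore", "singapore", "singapore"]));
console.log(pickRegion(exampleLatency, ["new-york", "new-york", "singapore"]));
```

With this table, a room made up entirely of players in Singapore is placed in the Singapore region instead of being routed through the US, which is exactly the situation from the example above. A room with a mixed group is placed wherever the group as a whole is best served.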

Cost

One of the biggest reasons developers cite for picking peer-to-peer is cost. Because a peer-to-peer architecture can run entirely on client devices, the developer doesn't need to pay for or maintain any infrastructure of their own. In contrast, a client-server architecture requires the developer to procure and maintain a server to facilitate gameplay.

Developers don't want to dish out money when they don't know whether their game will be successful or not, and they also don't want to be stuck maintaining their server in perpetuity. That's why Hathora Cloud completely manages the server infrastructure for you, and also offers a generous free tier to allow you to host your game at no cost in perpetuity up to a certain number of concurrent users.

Summary

The client-server model introduces upfront challenges for a developer, so it's natural for a new developer to have a bias towards the peer-to-peer architecture. However, the client-server architecture has a higher ceiling on gameplay experience for end users. Hathora Cloud was specifically built to address the various challenges of client-server, leaving the developer with all the upside and none of the difficulty.