Scalable WebSocket Architecture

The web has rapidly evolved from its origins as a collection of websites serving static HTML content to today's highly dynamic web applications. Whether it's games, chat, or productivity tools, users expect apps to be up to date and to enable real-time interaction with others.

WebSockets, which enable real-time communication from within web browsers, are one of the central pieces that have enabled this level of interactivity. And yet, despite the widespread adoption of WebSockets, surprisingly little has been written about effective ways to implement them at scale. Most current efforts try to retrofit techniques proven to scale traditional request/response applications (stateless) to real-time/streaming use cases (stateful), leading to architectural complexity and limitations.

In this post, we will explore potential architectures for a typical real-time application: a turn-based multiplayer game. We'll start with the commonly recommended architecture from the massively popular WebSocket library Socket.IO, then propose an alternative architecture and detail some of its benefits.

Architecture Options

Setup

We are designing a backend system for a real-time turn-based game similar to online Chess or Monopoly. Users are grouped into sessions or "rooms", and users within a room interact with each other. There can be many concurrent rooms at any given time (in the chess example, a live chess match corresponds to an active room). To support a large number of concurrent rooms, we'd like a horizontally scalable architecture where adding game servers increases the number of concurrent rooms/players that our system can support.

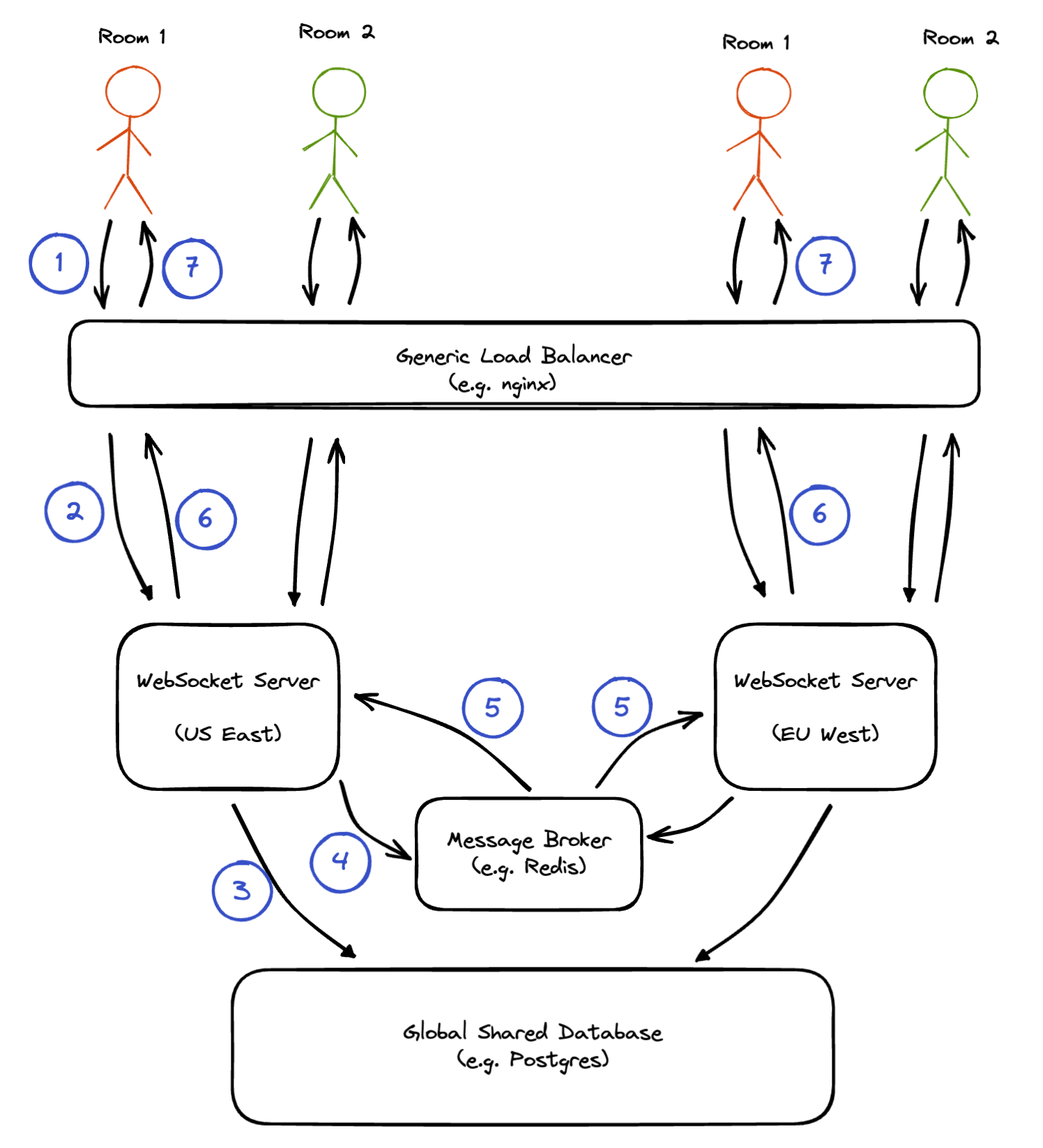

Traditional Socket.IO architecture

The most commonly cited architecture for scalable WebSocket backends can be found in the Socket.IO documentation. Players are routed via a generic load balancer to one of many available server instances, and the server instances communicate with each other via a message broker. State is maintained in a globally shared database.

Data Flow:

- A user from US East sends a message (e.g. a chess move) over a WebSocket connection scoped to a particular room/session (e.g. wss://example.com/room1). The connection is established with a load balancer like nginx, HAProxy, Amazon CloudFront, etc.

- The load balancer forwards the messages to a custom WebSocket server. When initially establishing the connection, the load balancer has to choose which WebSocket server instance to forward the connection to. To reduce latency, it should ideally pick one in the closest region to the user (using something like GeoDNS).

- The WebSocket server processes the message and issues a write query to a globally available database system like Postgres in order to update the game state. The database operation must be atomic to account for the fact that writes may also be coming from other WebSocket servers.

- The WebSocket server sends the game state update (e.g. the current chessboard position) to a message broker like Redis or RabbitMQ so that users connected to other WebSocket servers can receive the update.

- The message broker broadcasts the state update to all connected WebSocket servers.

- The WebSocket servers forward the state update (via the load balancer) to all WebSocket connections for the relevant room.

- The load balancer forwards the update over the client WebSocket connection.
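The broker fan-out in the steps above can be sketched with a small in-memory simulation. This is only an illustration under stated assumptions: `Broker` and `GameServer` are hypothetical stand-ins (not Socket.IO or Redis APIs), client connections are modelled as plain lists, and the database write is omitted.

```python
from collections import defaultdict


class Broker:
    """In-memory stand-in for a pub/sub broker (hypothetical, not a real client)."""

    def __init__(self):
        self.subscribers = []

    def subscribe(self, server):
        self.subscribers.append(server)

    def publish(self, room_id, update):
        # Every subscribed server receives every update, whatever the room.
        for server in self.subscribers:
            server.on_broker_message(room_id, update)


class GameServer:
    """Hypothetical WebSocket server; each client is modelled as an inbox list."""

    def __init__(self, name, broker):
        self.name = name
        self.broker = broker
        self.clients_by_room = defaultdict(list)  # room_id -> list of inboxes
        self.received = 0  # broker messages this server had to inspect
        broker.subscribe(self)

    def connect(self, room_id):
        inbox = []
        self.clients_by_room[room_id].append(inbox)
        return inbox

    def handle_client_message(self, room_id, update):
        # A real server would first write to the shared database here.
        self.broker.publish(room_id, update)

    def on_broker_message(self, room_id, update):
        self.received += 1
        # Filter: forward only to locally connected clients in this room.
        for inbox in self.clients_by_room.get(room_id, []):
            inbox.append(update)


broker = Broker()
east, west = GameServer("east", broker), GameServer("west", broker)
alice = east.connect("room1")
bob = west.connect("room1")
carol = west.connect("room2")

east.handle_client_message("room1", "e2e4")
```

In this sketch both servers inspect the update even though only the clients in room1 end up receiving it, which is the per-server cost discussed later in the post.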

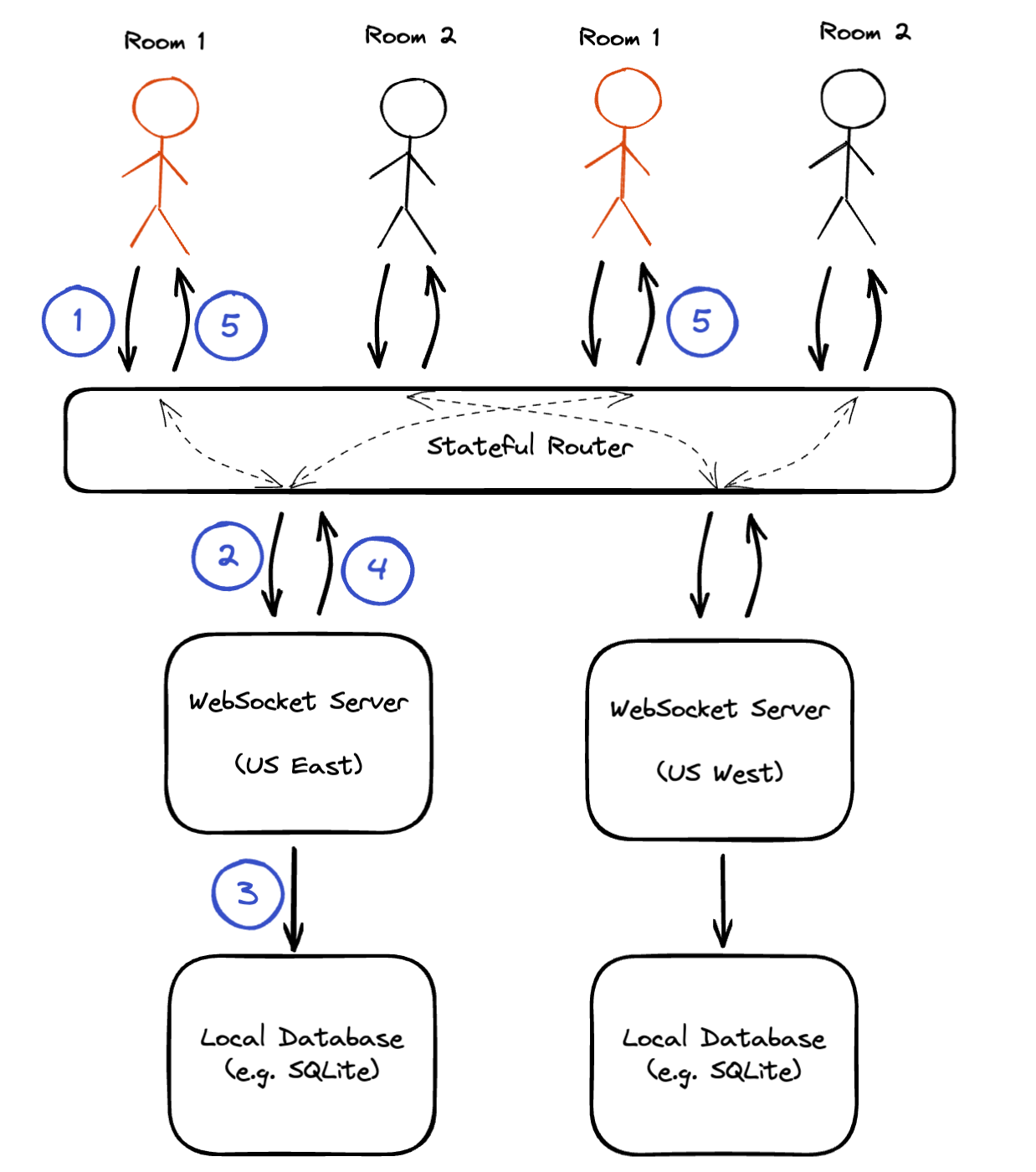

Architecture using a Stateful Router

This alternative architecture relies on a specialized stateful proxy that assigns users to server instances based on their room. This "colocation" of users localizes data to the server instance and eliminates the need for a separate message broker.

Data Flow:

- A user from US East sends a message over a WebSocket connection scoped to a particular room/session (e.g. wss://example.com/room1). The connection is established with the Stateful Router.

- The proxy forwards the messages to a custom WebSocket server. When initially establishing the connection, the proxy selects a WebSocket server for the requested room based on proximity to the user. For all subsequent connections to this roomId, the Stateful Router uses its internal map to route the connection to the same WebSocket server.

- The WebSocket server processes the message and issues a write query to a database in order to update the game state. Note that because all messages in the room are processed by a single server, this database can be local to that server. This can be as simple as updating an in-memory data structure inside the server process, or a locally embedded database like SQLite/RocksDB.

- The WebSocket server sends the game state update to the proxy.

- The Stateful Router sends the state update to a single client or, optionally, broadcasts it to all WebSocket clients connected to the room.
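The routing decision in this flow can be sketched as a room-to-server map. This is a minimal sketch, not the Hathora Coordinator's implementation: the `StatefulRouter` class, the region names, and the server names are hypothetical, and "proximity" is reduced to a lookup by the connecting user's region.

```python
class StatefulRouter:
    """Hypothetical router that pins each room to one server."""

    def __init__(self, servers_by_region):
        self.servers_by_region = servers_by_region  # region -> server name
        self.room_to_server = {}  # the router's internal map

    def route(self, room_id, user_region):
        # First connection for a room: pick the server nearest the user.
        if room_id not in self.room_to_server:
            self.room_to_server[room_id] = self.servers_by_region[user_region]
        # Later connections to the same room reuse the stored choice.
        return self.room_to_server[room_id]


router = StatefulRouter({"us-east": "server-a", "eu-west": "server-b"})
first = router.route("room1", "us-east")   # room1 is created near US East
second = router.route("room1", "eu-west")  # a European user joins room1
other = router.route("room2", "eu-west")   # a new room created from Europe
```

Here the second user is routed to the server already hosting room1 rather than to the server nearest to them, which is what keeps all of a room's traffic on one instance.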

Advantages of Stateful Router

Migrating from a message-broker based architecture to one utilizing a Stateful Router offers a number of advantages:

1) Fewer messages (i.e. improved scalability)

Before: Each WebSocket server needs to process every state update, filter for the messages addressed to its connected clients, and forward them along. Thus the scalability of the system is limited by the maximum throughput of a single server for processing state updates.

After: Only one server needs to process the messages and state updates for a given room. As long as the largest/busiest room can be handled on a single server, capacity grows with the number of servers, since adding servers adds room capacity without increasing the load on existing ones.
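A rough back-of-the-envelope comparison makes the difference concrete. The figures below (1,000 updates per second across 10 servers) are made up for illustration, and the router case assumes rooms are spread evenly across servers.

```python
def per_server_load_broker(total_updates_per_sec, num_servers):
    # Every server sees every update, so adding servers does not reduce
    # the number of updates each one has to inspect.
    return total_updates_per_sec


def per_server_load_router(total_updates_per_sec, num_servers):
    # Each update is processed only by the server hosting its room.
    # Assumes rooms (and their traffic) are evenly distributed.
    return total_updates_per_sec / num_servers


broker_load = per_server_load_broker(1000, 10)
router_load = per_server_load_router(1000, 10)
```

Under these assumptions each server inspects 1,000 updates per second in the broker design regardless of fleet size, versus 100 per second with room-based routing.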

2) Reduced server load (via connection multiplexing)

Before: Load balancers and servers maintain dedicated WebSocket connections for each client connected to them. This consumes resources (CPU, memory, file descriptors) on the servers.

After: Servers only have a single connection to the Stateful Router. The proxy combines all incoming and outgoing messages over this single connection. This technique is known as connection multiplexing, and it serves to shield the servers from concurrent user load. No more worries about file descriptor limits and memory constraints.
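One way to picture multiplexing is as an envelope that tags each message with the client and room it belongs to, so many logical client streams can share one physical connection. The JSON frame format and the `mux`/`demux` helpers below are assumptions made for illustration, not the wire format of any particular proxy.

```python
import json


def mux(client_id, room_id, payload):
    """Wrap a client message in an envelope for the shared connection."""
    return json.dumps({"client": client_id, "room": room_id, "payload": payload})


def demux(frame):
    """Unwrap an envelope back into (client_id, room_id, payload)."""
    msg = json.loads(frame)
    return msg["client"], msg["room"], msg["payload"]


# The single router-to-server connection, modelled here as a list of frames.
shared_connection = [
    mux("alice", "room1", "e2e4"),
    mux("bob", "room1", "e7e5"),
    mux("carol", "room2", "d2d4"),
]

# The server reads one stream and still knows who sent what.
decoded = [demux(frame) for frame in shared_connection]
```

The server holds one connection and one file descriptor in this sketch, while the router is the component that holds one socket per client.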

3) Data locality (via client colocation)

Before: Servers write to a shared database and need to ensure a data consistency model (e.g. via atomic write operations or CRDTs).

After: The data for a room is local to the server, allowing use of local in-memory or embedded databases – consistency becomes trivial. Additionally, if a persistence layer is used, it would only need consistency guarantees within a geography, without having to worry about global replication lag.
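As a small illustration of why consistency gets simpler, the sketch below stores moves in an embedded SQLite database using Python's standard `sqlite3` module. The table layout and the `apply_move` helper are hypothetical. The key assumption is that this server is the only writer for its rooms, so the next sequence number can be computed with a plain read followed by a write, with no cross-server coordination.

```python
import sqlite3

# An embedded database local to this server process (in-memory for the demo).
db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE moves ("
    " room_id TEXT, seq INTEGER, move TEXT,"
    " PRIMARY KEY (room_id, seq))"
)


def apply_move(room_id, move):
    """Append a move to a room's history and return its sequence number."""
    # Safe only because a single server processes all messages for the room.
    (next_seq,) = db.execute(
        "SELECT COALESCE(MAX(seq), 0) + 1 FROM moves WHERE room_id = ?",
        (room_id,),
    ).fetchone()
    db.execute("INSERT INTO moves VALUES (?, ?, ?)", (room_id, next_seq, move))
    db.commit()
    return next_seq


first = apply_move("room1", "e2e4")
second = apply_move("room1", "e7e5")
other_room = apply_move("room2", "d2d4")
```

With multiple servers writing to a shared database, the same read-then-write pattern would need a transaction or an atomic operation to avoid two servers picking the same sequence number.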

Hathora

At Hathora, our mission is to make it easier for developers to build, launch, and scale multiplayer games. One of the core technologies we have built is the Hathora Coordinator, which is our fully managed multi-tenant implementation of a Stateful Router.

Hathora Coordinator

An overview of the Hathora Coordinator and how it's used to power multiplayer games can be found in the Hathora documentation. In addition to the stateful routing functionality described in this article, the Hathora Coordinator also implements a few other key features:

Edge networking

To minimize latency, it's important to accept and process traffic closest to where users are (we wrote a blog post going into more detail about this). The Hathora Coordinator is deployed across 8+ regions globally and accepts traffic at the edge before routing it to the right server instance.

Transports

We talked about WebSockets in this article, but the same techniques apply to other persistent transport types as well. The Hathora Coordinator also supports direct TCP connections, with UDP and WebTransport support coming soon. These connections are handled entirely by the Coordinator, so your servers don't need to know or care which transport type the clients originally connected with, greatly simplifying your servers' networking code.

Analytics

Since all traffic goes through the Coordinator, it has access to important information like the number of concurrent connections over time, which geographies the connections originate from, the average connection duration, etc. Having access to this information can be invaluable for understanding your user base and engagement.

If you want to stay up to date on Hathora developments, join our Discord community.